Almost everyone gets into debt from time to time, and it’s not always a big deal. But as you approach retirement, you want to get out of as much debt as possible. With fewer payments to worry about, you can make your existing savings stretch further.

But getting rid of debt, especially high-interest debt, is easier said than done. If you’re struggling to get your finances under control, these four tips might help.

Keep in mind that everyone’s debt repayment strategy will be a little different, depending on what they owe and how close they are to retirement. But don’t make the mistake of thinking it will get easier over time. The sooner you start paying off your debts, the better off you will be in the long run.

1. Focus on high-interest debt first

You should always pay down the debts with the highest interest rates first. If you have payday loans or credit card debt, this is the best place to start. Don’t worry so much about mortgages or other low-interest debt. Keep making your payments on these, but don’t put any extra money into them until your high-interest debt is paid off.

The debt avalanche method is a popular strategy for paying off credit card debt across multiple cards. First, you make the minimum payment on all your cards each month. Then you put any remaining money on your debt with the highest interest rate. When you have paid off that debt, you move on to the debt with the next highest interest rate, and so on.
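The avalanche steps described above can be sketched in a few lines of Python. This is only an illustration: the card names, balances, rates, and the monthly budget are made-up assumptions, and the sketch covers a single month without accruing interest.

```python
def avalanche_month(debts, budget):
    """Split one month's debt budget using the avalanche ordering.

    debts: list of dicts with 'name', 'balance', 'apr' (percent) and 'minimum'.
    budget: total amount available for debt payments this month.
    Returns a dict mapping each debt name to the payment made.
    """
    payments = {}
    remaining = budget

    # Step 1: make the minimum payment on every debt (never more than is owed).
    for debt in debts:
        pay = min(debt["minimum"], debt["balance"])
        payments[debt["name"]] = pay
        remaining -= pay
    if remaining < 0:
        raise ValueError("budget does not cover the minimum payments")

    # Step 2: send whatever is left to the highest-rate debt first,
    # spilling over to the next-highest rate once a debt is cleared.
    for debt in sorted(debts, key=lambda d: d["apr"], reverse=True):
        if remaining <= 0:
            break
        extra = min(remaining, debt["balance"] - payments[debt["name"]])
        payments[debt["name"]] += extra
        remaining -= extra

    return payments


# Hypothetical example: three cards and a 700-per-month debt budget.
cards = [
    {"name": "Card A", "balance": 3000, "apr": 24, "minimum": 60},
    {"name": "Card B", "balance": 1500, "apr": 18, "minimum": 30},
    {"name": "Card C", "balance": 500, "apr": 29, "minimum": 25},
]
payments = avalanche_month(cards, 700)
print(payments)
```

In this made-up case, Card C has the highest rate, so it is paid off in full first, and the leftover money goes to Card A, the next-highest rate.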

You can also try using a balance transfer card or a personal loan. Balance transfer cards temporarily stop interest from growing your balance, so they’re a good choice if you’re sure you can pay off what you owe within the 0% introductory period. Otherwise, a personal loan might be a better option. These give you a predictable monthly payment, so you don’t have to worry about your balance growing.

2. Look for other ways to make more money

Bringing in more money can help you pay off your debt faster. You might work overtime at your current job or start a side hustle. Or you can put windfalls, like year-end bonuses, pay raises, and birthday money, toward debt repayment.

Again, if you have high-interest debt, focus on that first, and you might even want to put your retirement savings on hold for a while. You’re probably paying more interest on your credit card in a year than you’ll earn investing your money, so it makes more sense to put all your spare money toward that debt first. Then, when it’s paid off, you can save for retirement and work on your other types of debt at the same time.
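A rough comparison shows why high-interest debt usually comes first. The figures below (a 5,000 balance, a 22% card rate, and a 7% investment return) are assumptions chosen only for illustration, and the calculation uses simple one-year interest rather than monthly compounding.

```python
def one_year_amount(principal, annual_rate_percent):
    """Simple one-year interest (or return) on a principal."""
    return principal * annual_rate_percent / 100


# Assumed figures, for illustration only.
spare_cash = 5000
card_interest = one_year_amount(spare_cash, 22)    # cost of leaving the debt unpaid
investment_gain = one_year_amount(spare_cash, 7)   # gain from investing instead

print(f"Interest avoided by paying the card: {card_interest:.2f}")
print(f"Return from investing instead:       {investment_gain:.2f}")
```

Under these assumptions, paying down the card saves more than three times what investing the same money would earn in a year.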

3. Don’t touch your retirement savings early

You may be tempted to withdraw some of your retirement savings early to pay off your debts, but this is actually counterproductive. For one thing, you’ll pay a 10% early withdrawal penalty if you take money out of most retirement accounts before you turn 59½, and that’s on top of the taxes you’ll have to pay if the money comes from a tax-deferred account.

You will also significantly reduce your retirement savings. When you start saving again, you will need to save a lot more per month to retire on time. It’s better to leave your savings alone so they can grow until retirement.
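To see how much an early withdrawal can cost, here is a small sketch of the penalty and taxes described above. The 10% penalty comes from the article; the 22% income tax rate and the 10,000 withdrawal are assumptions for illustration, and real tax bills depend on your own situation.

```python
def early_withdrawal_net(amount, tax_rate_percent, penalty_percent=10):
    """Estimate what is left of an early withdrawal from a tax-deferred account.

    tax_rate_percent is an assumed income tax rate on the withdrawal;
    penalty_percent defaults to the 10% early withdrawal penalty.
    """
    penalty = amount * penalty_percent / 100
    taxes = amount * tax_rate_percent / 100
    return amount - penalty - taxes


# Assumed example: withdraw 10,000 while in a 22% tax bracket.
net = early_withdrawal_net(10000, tax_rate_percent=22)
print(f"Left to put toward debt: {net:.2f}")
```

With these assumed numbers, nearly a third of the withdrawal goes to the penalty and taxes before any of it reaches your debt.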

4. Delay retirement

When all else fails, you can always delay your retirement to give yourself more time to save and pay off debt. It’s not the ideal solution, but it’s better than running out of money early. You could also slowly transition into retirement, perhaps going part-time for a while before quitting for good.

The Motley Fool has a disclosure policy.

The Motley Fool is a USA TODAY content partner offering financial news, analysis and commentary designed to help people take control of their financial lives. Its content is produced independently of USA TODAY.

Los Angeles, USA - According to a Verified Market Reports study, the global Flicker Noise Measurement System market is expected to grow at a tremendous rate over the coming years. Titled “Global Flicker Noise Measurement System Market Size and Forecast 2022-2029”, this report provides an in-depth look at the future of the global Flicker Noise Measurement System market. Increased demand for smart technologies and increased construction of skyscrapers and tall commercial buildings are expected to contribute significantly to the growth of the global Flicker Noise Measurement System market.

The analysts behind this report carried out a comprehensive study of the global Flicker Noise Measurement System market, considering key factors such as drivers, challenges, recent trends, opportunities, developments and the competitive landscape. This report provides a clear understanding of the current and future scenarios of the global Flicker Noise Measurement System industry. The researchers used techniques such as PESTLE and Porter’s five forces analysis. The report also provides data on Flicker Noise Measurement System production, capacity, price, cost, margin, and revenue, allowing players to gain a clear understanding of existing and future market conditions.

Get | Download a sample copy with table of contents, graphics and list of figures @ https://www.verifiedmarketreports.com/download-sample/?rid=45340

Main Drivers and Obstacles

The high-impact factors and drivers have been studied in this report to help readers understand the overall development. Additionally, the report covers the constraints and challenges that can be stumbling blocks in the players’ path. This will help users make informed, careful business decisions. The experts also focused on upcoming trade prospects.

Sector outlook

The key segments, including types and applications, are detailed in this report. Verified Market Reports consultants studied all segments and used historical data to estimate market size. They also discussed the growth opportunities each segment could represent in the future. The study provides production and revenue data by type and application for the historical period (2016-2021) and the forecast period (2022-2029).

Major Players Covered in Flicker Noise Measurement System Markets:

- Keysight

- ProPlus Design Solutions

- AdMOS

- Platform Design Automation

Global Flicker Noise Measurement System Market Segmentation:

Flicker Noise Measurement System Market Split By Type:

Flicker Noise Measurement System Market Split By Application:

- Semiconductor company

- Research institute

- Others

Regional market analysis for the Flicker Noise Measurement System market can be represented as follows:

This part of the report assesses key regional and country-level markets on the basis of market size by type and application, key players, and market forecast.

Based on geography, the global flicker noise measurement system market has been segmented as follows:

- North America includes the United States, Canada and Mexico

- Europe includes Germany, France, UK, Italy, Spain

- South America includes Colombia, Argentina, Nigeria and Chile

- Asia Pacific includes Japan, China, Korea, India, Saudi Arabia and Southeast Asia

Get | Discount on the purchase of this report @ https://www.verifiedmarketreports.com/ask-for-discount/?rid=45340

Scope of the Flicker Noise Measurement System Market Report

Industry Overview: The first section of the research study covers a global Flicker Noise Measurement System market overview, market status and outlook, and product scope. Additionally, it provides highlights of major segments of the global Flicker Noise Measurement System market i.e., region, type, and application segments.

Competitive analysis: This report throws light on significant mergers and acquisitions, business expansion, product or service differences, market concentration, global Flicker Noise Measurement System market competitive status and market size by player.

Company profiles and key data: This section covers the leading players of the global Flicker Noise Measurement System market based on revenue, products, activities, and other factors mentioned above.

Market Size by Type and Application: Besides providing an in-depth analysis of the global Flicker Noise Measurement System market size by type and application, this section provides research on key end-users or consumers and potential applications.

North American market: This report depicts the changing size of the North America market by application and player.

European market: This section of the report shows how the size of the European market will evolve over the next few years.

Chinese market: It provides analysis of the Chinese market and its size for all years of the forecast period.

Rest of the Asia-Pacific market: The rest of the Asia-Pacific market is analyzed here in detail on the basis of applications and players.

Central and South America Market: The report illustrates changes in Central and South America market size by players and applications.

MEA Market: This section shows how the MEA market size changes over the forecast period.

Market dynamics: This report covers the drivers, restraints, challenges, trends, and opportunities of the global Flicker Noise Measurement System market. This section also includes Porter’s analysis of the five forces.

Findings and Conclusions: It provides strong recommendations for new and established players to secure a position of strength in the global Flicker Noise Measurement System market.

Methodology and data sources: This section includes author lists, disclaimers, research approaches, and data sources.

The main questions answered

What will be the size and annual growth rate of the global Flicker Noise Measurement System market in the next five years?

Which sectors will take the lead in the global Flicker Noise Measurement System market?

What is the average manufacturing cost?

What are the key business tactics adopted by the key players in the global Flicker Noise Measurement System market?

Which region will gain the lion’s share in the global Flicker Noise Measurement System market?

Which companies will dominate the global Flicker Noise Measurement System market?

Research Methodology

The reports are compiled from reliable primary and secondary research sources, using the latest research techniques to prepare detailed and precise studies like this one. The approach combines data triangulation, top-down and bottom-up analysis, and advanced research processes to deliver comprehensive market research reports.

For more information or query or customization before buying, visit @ https://www.verifiedmarketreports.com/product/global-flicker-noise-measurement-system-market-2019-by-manufacturers-regions-type-and-application-forecast-to-2024/

Visualize the Flicker Noise Measurement System Market Using Verified Market Intelligence:-

Verified Market Intelligence is our BI platform for market narrative storytelling. VMI offers in-depth forecast trends and accurate insights on over 20,000 emerging and niche markets, helping you make critical revenue-impacting decisions for a bright future.

VMI provides a global overview and competitive landscape with respect to region, country and segment, as well as key players in your market. Present your market report and results with an integrated presentation function that saves you more than 70% of your time and resources for presentations to investors, sales and marketing, R&D and product development. VMI enables data delivery in Excel and interactive PDF formats with more than 15 key market indicators for your market.

Visualize the Flicker Noise Measurement System Market Using VMI@ https://www.verifiedmarketresearch.com/vmintelligence/

About Us: Verified Market Reports

Verified Market Reports is a leading global research and advisory company serving over 5000 global clients. We provide advanced analytical research solutions while delivering information-enriched research studies.

We also provide insight into the strategic and growth analytics and data needed to achieve business goals and critical revenue decisions.

Our 250 analysts and SMEs offer a high level of expertise in data collection and governance using industry techniques to collect and analyze data on over 25,000 high impact and niche markets. Our analysts are trained to combine modern data collection techniques, superior research methodology, expertise and years of collective experience to produce informative and accurate research.

Our research spans a multitude of industries, including energy, technology, manufacturing and construction, chemicals and materials, food and beverage, and more. Having served many Fortune 2000 organizations, we bring a wealth of reliable experience that covers all kinds of research needs.

Contact us:

Mr. Edwyne Fernandes

USA: +1 (650)-781-4080

UK: +44 (753)-715-0008

APAC: +61 (488)-85-9400

US Toll Free: +1 (800)-782-1768

E-mail: [email protected]

Website: https://www.verifiedmarketreports.com/

ING is a global financial institution with a strong European base, offering banking services through its operating company ING Bank. ING Bank’s goal is to empower people to stay ahead in life and in business. ING Bank’s more than 57,000 employees provide retail and wholesale banking services to customers in over 40 countries.

ING Group shares are listed on the Amsterdam Stock Exchange (INGA NA, INGA.AS), Brussels Stock Exchange and the New York Stock Exchange (ADR: ING US, ING.N).

Sustainability is an integral part of ING’s strategy, as evidenced by ING’s leading position in sector benchmarks. ING’s ESG rating by MSCI was confirmed to be ‘AA’ in December 2021. ING Group shares are included in the main sustainability index and environmental, social and governance (ESG) index products of leading providers STOXX, Morningstar and FTSE Russell. In January 2021, ING received an ESG assessment score of 83 (“strong”) from S&P Global Ratings.

IMPORTANT LEGAL INFORMATION

Elements of this press release contain or may contain information about ING Groep NV and/or ING Bank NV within the meaning of Article 7, paragraphs 1 to 4, of EU Regulation No 596/2014.

Some of the statements contained herein are not historical facts, including, without limitation, certain statements made of future expectations and other forward-looking statements that are based on management’s current beliefs and assumptions and involve known and unknown risks and uncertainties that could cause actual results, performance or events to differ materially from those expressed or implied in such statements. Actual results, performance or events may differ materially from those referred to in these statements due to a number of factors, including, without limitation: (1) changes in general economic conditions and customer behavior, in particular economic conditions in ING’s principal markets, including changes affecting exchange rates and the regional and global economic impact of Russia’s invasion of Ukraine and related international response measures, (2) the effects of the Covid-19 pandemic and related response measures, including lockdowns and travel restrictions, on the economic conditions of the countries in which ING operates, on ING’s business and operations and on employees, customers and counterparties of ING, (3) changes affecting interest rate levels, (4) any failure of a major market player and associated market disruptions, (5) changes in financial market performance, including in Europe and developing markets, (6) fiscal uncertainty in Europe and the United States, (7) discontinuation of or changes in “benchmark” indices, (8) inflation and deflation in our major markets, (9) changes in conditions in the credit and capital markets generally, including changes in the creditworthiness of borrowers and counterparties, (10) failures of banks covered by state compensation schemes, (11) non-compliance with or changes in laws and regulations, including those relating to financial services, financial economic crimes and tax laws, and their interpretation and application, (12) geopolitical risks, political instabilities and actions of governments and regulatory authorities, including in the context of Russia’s invasion of Ukraine and related international response measures, (13) legal and regulatory risks in certain countries with less developed legal and regulatory frameworks, (14) prudential supervision and regulation, including with respect to stress tests and regulatory restrictions on dividends and distributions (also among members of the group), (15) the regulatory consequences of the withdrawal of the United Kingdom from the European Union, including authorizations and equivalence decisions, (16) the ability of ING to meet minimum capital and other prudential regulatory requirements, (17) changes in regulation of the US commodities and derivatives business of ING and its clients, (18) application of bank recovery and resolution regimes, including write-down and conversion powers relating to our securities, (19) the outcome of current and future litigation, enforcement proceedings, investigations or other regulatory actions, including complaints from customers or stakeholders who believe they have been deceived or treated unfairly, and other conduct issues, (20) changes in tax laws and regulations and risks of non-compliance or investigation in connection with tax laws, including FATCA, (21) operational and IT risks, such as system disruptions or failures, security breaches, cyber-attacks, human error, changes in operational practices or inadequate controls, including in relation to third parties we do business with, (22) cybercrime risks and challenges, including the effects of cyberattacks and changes in cybersecurity and data privacy laws and regulations, (23) changes in general competitive factors, including the ability to increase or maintain our market share, (24) inability to protect our intellectual property and infringement claims by third parties, (25) inability of counterparties to meet their financial obligations or ability to enforce rights against such counterparties, (26) changes in credit ratings, (27) activities, operations, risks and regulatory, reputational, transition and other challenges related to climate change and ESG issues, (28) inability to attract and retain key personnel, (29) future defined benefit pension plan obligations, (30) inability to manage business risks, including in relation to the use of models, the use of derivatives or the maintenance of appropriate policies and guidelines, (31) changes in capital and credit markets, including interbank funding, as well as customer deposits, which provide liquidity and capital to fund our operations, and (32) other risks and uncertainties detailed in the latest annual report of ING Groep NV (including the risk factors contained therein) and the most recent information from ING, including press releases, which are available on www.ING.com.

This document may contain inactive text addresses to websites operated by us and third parties. Reference to such websites is made for informational purposes only and information found on such websites is not incorporated by reference herein. ING makes no representations or warranties as to the accuracy or completeness of the information found on websites operated by third parties, and assumes no liability in respect thereof. ING expressly disclaims any responsibility for any information found on websites operated by third parties. ING cannot guarantee that websites operated by third parties will remain available after the publication of this document, or that any information found on these websites will not change after the filing of this document. Many of these factors are beyond ING’s control.

Any forward-looking statement made by or on behalf of ING speaks only as of the date on which it is made, and ING undertakes no obligation to publicly update or revise any forward-looking statement, whether as a result of new information or for any other reason.

This document does not constitute an offer to sell or a solicitation of an offer to buy any securities in the United States or any other jurisdiction.

Amrita University, INCOIS started an initiative called Tsunami Ready Program in Alappad to develop tsunami preparedness.

Amrita Vishwa Vidyapeetham University signs agreement with INCOIS.

NEW DELHI: Amrita Vishwa Vidyapeetham has signed an agreement with the Indian National Center for Ocean Information Services (INCOIS) to equip coastal communities with early warning solutions for natural disasters and disaster preparedness. The five-year agreement addresses “community resilience, risk and disaster preparedness, joint research and development, and collaborative courses,” according to a statement from Amrita University.

As part of this agreement, INCOIS and Amrita University have launched the Tsunami Ready Programme, a performance-based community initiative of the Intergovernmental Oceanographic Commission (IOC) of UNESCO, in Alappad Grama Panchayat with the aim of developing tsunami preparedness. Alappad was one of the hardest-hit locations during the 2004 Indian Ocean tsunami. The agreement covers academic, research and capacity-building initiatives through which INCOIS and Amrita University aim to develop an effective response to disasters.

The Tsunami Ready program launched by INCOIS in collaboration with Amrita Vishwa Vidyapeetham seeks to build the resilience of coastal communities by raising awareness and preparing strategies. As part of the program, a meeting was held with parish members, the President of the Panchayat, members of the Alappad community and Amrita teachers and students. INCOIS aims to extend the program to neighboring coastal regions.

“INCOIS is pleased to collaborate with Amrita Vishwa Vidyapeetham. This MoU will strengthen collaborative research between the academic and scientific community, thereby improving the reach and accessibility of INCOIS’ operational ocean forecasts for the coastal population. In addition, the Tsunami-Ready Community Recognition Program offered under this MoU will build the capacity of coastal communities to prepare for and respond effectively to tsunamis and other ocean-related hazards,” said Srinivasa Kumar, Director of INCOIS.

(Kailey Hagen)

Persistence

SNIA held its Persistent Memory and Computational Storage Summit, virtual this year, like last year. The Summit explored some of the latest developments in these areas. Let’s explore some of the ideas from this virtual conference from day one.

Dr. Yang Seok, vice president of the Memory Solutions Lab at Samsung, talked about the company’s SmartSSD. He argued that computational storage devices, which offload processing from CPUs, can reduce power consumption and thus provide a green computing alternative. He pointed out that, thanks to technological innovations, the energy consumption of data centers has remained stable at around 1% of global electricity use since 2010 (200-250 TWh per year in 2020). Nevertheless, there is a challenging goal of reducing greenhouse gas emissions from data centers by 53% between 2020 and 2030.

Computational storage drives (CSDs) can be used to offload processing from a CPU, freeing the CPU for other tasks, or to speed up local processing closer to the stored data. This local processing is done with less power than a CPU, can be used for data reduction operations in the storage device (to enable higher storage capacities and more efficient storage), can avoid data movement, and can be part of a virtualization environment. CSDs appear to be competitive and use less power for I/O-intensive tasks. In addition, the computing power of CSDs increases with the number of CSDs used.

Samsung announced its first SmartSSD in 2020 and says that its next generation will be available soon. The next generation will allow for greater customization of what the CSD’s processor can do, allowing its use in more applications and possibly saving power for many processing tasks.

Samsung 2nd Generation SmartSSD

Stephen Bates from Eideticom and Kim Malone from Intel talked about new standards developments for NVMe computational storage. One addition to the command set for computational programs is the compute namespace: an entity within an NVMe subsystem that is capable of running one or more programs, may have asymmetric access to subsystem memory, and may support a subset of all possible program types.

Compute namespaces for computational storage

Both device-defined and downloadable programs are supported. Device-defined programs are fixed programs provided by the manufacturer, or features implemented by the device that can be called as programs, such as compression or decryption. Downloadable programs are loaded into the compute namespace by the host.
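The difference between device-defined and downloadable programs can be pictured with a toy model. To be clear, this is not the NVMe command set or any real driver API: the `ComputeNamespace` class, its method names, and the example programs are all hypothetical and exist only to illustrate the idea.

```python
import zlib


class ComputeNamespace:
    """Toy stand-in for a compute namespace (hypothetical, not an NVMe API)."""

    def __init__(self, device_defined):
        # Device-defined programs are fixed when the "device" is built.
        self._programs = dict(device_defined)
        self._downloaded = set()

    def load_program(self, name, func):
        """Host-side download of a new program into the namespace."""
        if name in self._programs:
            raise ValueError(f"program {name!r} already exists")
        self._programs[name] = func
        self._downloaded.add(name)

    def program_type(self, name):
        if name not in self._programs:
            raise KeyError(name)
        return "downloadable" if name in self._downloaded else "device-defined"

    def execute(self, name, data: bytes):
        """Run a program on data that already lives next to the namespace."""
        return self._programs[name](data)


# Compression and decompression play the role of manufacturer-provided programs.
ns = ComputeNamespace({"compress": zlib.compress, "decompress": zlib.decompress})

# The host downloads its own program: count newline characters in a buffer.
ns.load_program("count_newlines", lambda data: data.count(b"\n"))

packed = ns.execute("compress", b"log line\n" * 1000)
print(len(packed), ns.execute("count_newlines", b"a\nb\n"))
```

In this sketch the host only ever names a program and receives a result, which mirrors the point that the processing itself stays with the device.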

Andy Rudoff from Intel provided an update on persistent memory. He talked about developments along a timeline. He said that in 2019 Intel Optane PMem became generally available. The image below shows Intel’s approach to connecting to the memory bus with Optane PMem.

Memory bus connection with Optane PMem

Note that Direct Access (DAX) is key to this use of Optane PMem. The following image shows a timeline of PMem-related developments since 2012.

Timeline of PMem-related developments since 2012

Andy reviewed several customer use cases for Intel’s Optane PMem, including Oracle Exadata with PMem access via RDMA, Tencent Cloud and Baidu. He also discussed future PMem directions, especially paired with CXL. These include accelerating AI/ML and data-centric applications with temporal caching and persistent metadata storage.

Jinpyo Kim from VMware and Michael Mesnier from Intel Labs talked about computational storage in a virtualized environment, in collaboration with MinIO. Some of the featured uses included data scrubbing (reading data and detecting accumulated errors) for a MinIO storage stack and a Linux filesystem. They found that doing this computation near the stored data was 50% to 18 times more scalable, depending on the speed of the link (the process is read-intensive). VMware, in prototype research with UC Irvine, did a project on near-storage log analysis and found an order of magnitude better query performance compared to using software alone. This capability is ported to a Samsung SmartSSD.
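Data scrubbing is read-intensive because every block has to be read and checked, which is why running it next to the data helps. The sketch below is a simplified assumption of how that might look, using CRC32 checksums and in-memory blocks; it is not the MinIO, VMware, or Intel implementation, and the block layout and function names are made up for illustration.

```python
import zlib


def make_block(data: bytes):
    """Store a block together with the CRC32 computed when it was written."""
    return {"data": data, "crc": zlib.crc32(data)}


def scrub_near_storage(blocks):
    """Verify each block where it is stored; only the bad indices leave the device."""
    return [i for i, b in enumerate(blocks) if zlib.crc32(b["data"]) != b["crc"]]


def bytes_moved_host_scrub(blocks):
    """A host-side scrub has to pull every block across the link before checking it."""
    return sum(len(b["data"]) for b in blocks)


# Eight 4 KiB blocks, one of which is corrupted after it was written.
blocks = [make_block(bytes([i]) * 4096) for i in range(8)]
blocks[5]["data"] = b"\xff" + blocks[5]["data"][1:]

bad = scrub_near_storage(blocks)
host_bytes = bytes_moved_host_scrub(blocks)
print(f"corrupt blocks: {bad}, bytes a host-side scrub would transfer: {host_bytes}")
```

The near-storage version returns only a short list of suspect blocks, while the host-side version would first move all of the data over the link, which is consistent with the benefit depending on link speed.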

VMware also used NGD computing storage devices to run a Greenplum MPP database. They have done a lot of work on virtualizing CSD (they call it vCSD) on vSphere/vSAN, which makes it easier to share hardware accelerators and migrate a vCSD between compatible hosts. CSDs can be used to de-aggregate cloud-native applications and offload storage-intensive functions. The figure below shows collaborative efforts to use CSDs between MinIO, VMware, and Intel.

Collaboration between VMware, MinIO and Intel

Meta’s Chris Petersen talked about AI memory at Meta. Meta uses AI for many applications and at scale, from the data center to the edge. Since AI workloads evolve so rapidly, they require more vertical integration, from software requirements to hardware design. A considerable portion of capacity requires high-bandwidth accelerator memory, but inference keeps most of its capacity at low bandwidth compared to training. Additionally, inference has tight latency requirements. They found that a tier of memory beyond HBM and DRAM can be leveraged, especially for inference.

They found that software-defined memory backed by SSDs required SCM SSDs (Optane SSDs). The use of these faster SSDs reduced the need to scale up and therefore reduced power. The figure below shows Meta’s view of the memory tiers required for AI applications, showing that higher CXL latency, performance and more memory/storage capacity will be required to achieve optimized performance, cost and efficiency.

Meta AI memory levels

Arthur Sainio and Pekon Gupta of SMART Modular Technologies discussed the use of NVDIMM-N in DDR5 and CXL compatible applications. These NVDIMM-Ns include a battery backup that allows the module’s DRAM memory to be written back to flash memory in the event of a power outage. This technology is also developed for the CXL application with an NV-XMM specification of devices that have an integrated power source for backup power and operate with the standard programming model for CXL Type-3 devices. The figure below shows the form factors of these devices.

CXL NVDIMM-N Form Factors

In addition to these discussions, David Eggleston of Intuitive Cognitive Consulting moderated a panel discussion with the day’s speakers, and there was a Birds of a Feather session on computational storage at the end of the day, moderated by Scott Shadley of NGD and AMD’s Jason Molgaard.

Samsung, Intel, Eideticom, VMware, Meta and SMART Modular gave insightful presentations on persistent memory and computational storage on day one of the SNIA 2022 Persistent Memory and Computational Storage Summit.

A group of researchers from the University of Bologna and Cineca explored an experimental eight-node, 32-core RISC-V supercomputer cluster. The demonstration showed that even a group of SiFive’s humble Freedom U740 SoCs could run supercomputer applications at relatively low power. Additionally, the cluster performed well and supported a basic high-performance computing stack.

Need for RISC-V

One of the benefits of the open source RISC-V instruction set architecture is the relative simplicity of building a highly customized RISC-V core for a particular application, one that provides a very competitive balance of performance, power and cost. This makes RISC-V suitable for emerging applications and for high-performance computing projects tailored to a particular workload. The group explored the cluster to prove that RISC-V based platforms can work for high performance computing (HPC) from a software perspective.

“Monte Cimone is not intended to achieve strong floating point performance, but it was built with the purpose of ‘priming the pipe’ and exploring the challenges of integrating a multi-node RISC-V cluster capable of delivering a production HPC stack including interconnect, storage, and power monitoring infrastructure on RISC-V hardware,” the project description reads (via NextPlatform).

For its experiments, the team took a ready-to-use Monte Cimone cluster consisting of four dual-board blades in a 1U form factor built by E4, an Italian HPC company (note that E4’s Monte Cimone cluster consists of six blades). The Monte Cimone is a platform “for porting and tuning software stacks and HPC applications relevant to the RISC-V architecture”, so the choice was well justified.

Cluster

The 1U Monte Cimone machines used two SiFive HiFive Unmatched development motherboards, each powered by SiFive’s heterogeneous multi-core Freedom U740 SoC, which incorporates four U74 cores running at up to 1.4 GHz and one S7 core using the company’s proprietary Mix+Match technology, as well as 2 MB of L2 cache. Additionally, each platform has 16GB of DDR4-1866 memory and a 1TB NVMe SSD.

Each node also sports a Mellanox ConnectX-4 FDR 40 Gbps Host Channel Adapter (HCA), but for some reason RDMA did not work even though the Linux kernel could recognize the device driver and mount the kernel module to manage the Mellanox OFED stack. Therefore, two of the six nodes were equipped with Infiniband HCA cards with a throughput of 56 Gbps to maximize the available inter-node bandwidth and compensate for the lack of RDMA.

One of the critical parts of the experiment was porting the essential HPC services required to run compute-intensive workloads. The team reported that porting NFS, LDAP, and the SLURM job scheduler to RISC-V was relatively straightforward; they then installed an ExaMon plugin dedicated to data sampling, a broker for transport layer management and a database for storage.

Results

Since using a low-power cluster designed for software porting purposes for real HPC workloads doesn’t make sense, the team ran HPL and Stream benchmarks to measure GFLOPS performance and memory bandwidth. The results were mixed, however.

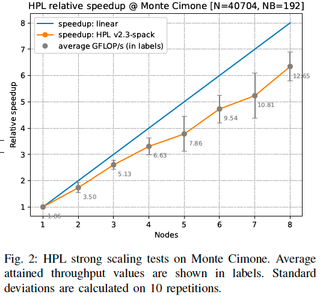

The maximum theoretical performance of SiFive’s U74 core is 1 GFLOPS, which suggests that the maximum theoretical performance of a Freedom U740 SoC should be 4 GFLOPS. Unfortunately, each node achieved a sustained performance of only 1.86 GFLOPS in HPL, which means that the maximum compute capacity of an eight-node cluster should be around 14.88 GFLOPS, assuming perfect linear scaling. The entire cluster reached a maximum sustained performance of 12.65 GFLOPS, or 85% of the extrapolated achievable peak. Meanwhile, because each SoC falls well short of its own theoretical peak, 12.65 GFLOPS is only 39.5% of the theoretical peak of the whole machine, which might not be so bad for an experimental system if we set aside the poor per-node efficiency of the U740.
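The percentages above follow directly from the quoted figures. As a rough check, here is a minimal Python sketch that reproduces them; the constant and function names are illustrative assumptions, not anything taken from the researchers’ report.

```python
# Figures quoted in the article; all names below are illustrative only.
CORES_PER_SOC = 4            # U74 application cores per Freedom U740
PEAK_GFLOPS_PER_CORE = 1.0   # theoretical peak per U74 core
NODES = 8                    # nodes in the benchmarked cluster
HPL_GFLOPS_PER_NODE = 1.86   # sustained HPL result for one node
HPL_GFLOPS_CLUSTER = 12.65   # sustained HPL result for the whole cluster


def hpl_summary():
    """Return the derived HPL figures as a dict of plain floats."""
    peak_per_node = CORES_PER_SOC * PEAK_GFLOPS_PER_CORE
    peak_cluster = peak_per_node * NODES
    extrapolated = HPL_GFLOPS_PER_NODE * NODES
    return {
        "peak_per_node": peak_per_node,
        "peak_cluster": peak_cluster,
        "extrapolated_cluster": extrapolated,
        # Cluster result relative to eight perfectly scaled nodes.
        "scaling_efficiency": HPL_GFLOPS_CLUSTER / extrapolated,
        # Cluster result relative to the theoretical peak of all 32 cores.
        "fraction_of_peak": HPL_GFLOPS_CLUSTER / peak_cluster,
    }


if __name__ == "__main__":
    for name, value in hpl_summary().items():
        print(f"{name}: {value:.3f}")
```

Separating the two ratios makes the distinction explicit: the 85% figure measures how well the nodes scale together, while the 39.5% figure also includes the gap between each SoC’s sustained and theoretical performance.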

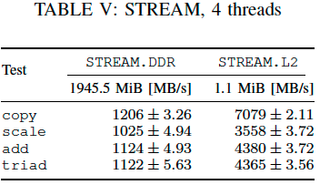

As for memory bandwidth, each node should deliver around 14.928 GB/s using a DDR4-1866 module. In fact, it never exceeded 7760 MB/s, which is not a good result. The results with the unmodified upstream STREAM benchmark are even less impressive: a four-threaded workload achieved no more than 15.5% of the maximum available bandwidth, which is much lower than the results of the other clusters. On the one hand, these results demonstrate the Freedom U740’s mediocre memory subsystem, but on the other hand, they also show that software optimizations could improve things.
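The 14.928 GB/s figure is consistent with a single 64-bit DDR4-1866 channel. The sketch below, which assumes exactly that configuration and uses hypothetical names, derives the peak and converts the quoted results into comparable units.

```python
def ddr_peak_mb_s(transfers_mt_s, bus_width_bits=64):
    """Peak bandwidth in MB/s: transfers per second times bytes per transfer.

    Assumes a single memory channel of the given width.
    """
    return transfers_mt_s * bus_width_bits / 8


PEAK_MB_S = ddr_peak_mb_s(1866)     # DDR4-1866 on a 64-bit bus
BEST_MEASURED_MB_S = 7760           # best result quoted in the article
UPSTREAM_STREAM_FRACTION = 0.155    # unmodified STREAM, four threads

best_fraction = BEST_MEASURED_MB_S / PEAK_MB_S
upstream_stream_mb_s = UPSTREAM_STREAM_FRACTION * PEAK_MB_S

if __name__ == "__main__":
    print(f"theoretical peak:   {PEAK_MB_S:.0f} MB/s")
    print(f"best measured:      {best_fraction:.1%} of peak")
    print(f"upstream STREAM:    about {upstream_stream_mb_s:.0f} MB/s")
```

Under these assumptions, the best measured result is roughly half of the theoretical peak, and the unmodified benchmark lands at a little over 2.3 GB/s.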

In terms of energy consumption, the Monte Cimone cluster keeps its promises: it is low. For example, the actual power consumption of a SiFive Freedom U740 peaks at 5.935W under CPU-intensive HPL workloads, while in standby it consumes approximately 4.81W.
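Combining the power figures with the HPL result gives a rough sense of efficiency. The sketch below assumes that the 1.86 GFLOPS per-node result corresponds to a single Freedom U740 and that the quoted wattages cover the SoC only; neither assumption is confirmed by the article, and the names are illustrative.

```python
HPL_GFLOPS_PER_SOC = 1.86   # assumed: one node equals one Freedom U740
POWER_HPL_W = 5.935         # SoC power under CPU-intensive HPL
POWER_IDLE_W = 4.81         # SoC power in standby

# Sustained floating-point throughput per watt under HPL (SoC only).
gflops_per_watt = HPL_GFLOPS_PER_SOC / POWER_HPL_W

# Extra power drawn when going from standby to full HPL load.
load_delta_w = POWER_HPL_W - POWER_IDLE_W

# Share of the loaded power budget that is already spent in standby.
idle_share = POWER_IDLE_W / POWER_HPL_W

if __name__ == "__main__":
    print(f"{gflops_per_watt:.3f} GFLOPS/W under HPL")
    print(f"{load_delta_w:.3f} W added under load")
    print(f"{idle_share:.1%} of loaded power drawn in standby")
```

On these numbers, most of the SoC’s power draw is present even in standby, so the difference between an idle and a fully loaded chip is only about one watt.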

Summary

The Monte Cimone cluster used by the researchers is perfectly capable of running an HPC software stack and suitable test applications, which is good enough. Also, SiFive’s HiFive Unmatched board and E4’s systems are intended for software porting, so the smooth running of NFS, LDAP, SLURM, ExaMon and other programs was a pleasant surprise. The lack of RDMA support was not.

“To our knowledge, this is the first fully operational RISC-V cluster supporting a basic HPC software stack, proving the maturity of the RISC-V ISA and the first generation of commercially available RISC-V components,” the team wrote in its report. “We also evaluated support for Infiniband network adapters that are recognized by the system, but are not yet capable of supporting RDMA communication.”

But the actual performance of the cluster did not meet expectations. This was largely due to the U740’s mediocre performance and capabilities, but software readiness also played a role. In other words, while HPC software can run on RISC-V based systems, it cannot yet deliver the expected performance. This will change once developers optimize their programs for the open source architecture and appropriate hardware is released.

Indeed, the researchers say their future work involves improving the software stack, adding RDMA support, implementing dynamic power and thermal management, and using RISC-V-based accelerators.

As for hardware, SiFive can build SoCs with up to 128 high-performance cores. These CPUs are aimed at data centers and HPC workloads, so expect them to have decent performance scalability and a decent memory subsystem. Additionally, once SiFive enters these markets, it will need to provide compatibility and software optimizations, so expect the chipmaker to encourage software developers to tweak their programs for the RISC-V ISA.

Automate 2022 has returned to being an in-person event after briefly transitioning to a virtual event in 2021. This was my second trade show since joining Vision Systems Design, and it appears trade show attendance is on the rise. There were hand sanitizer stations located around the show floor, but no mask mandate, and the crowd made it clear that members of the automation market were ready to see the latest innovations and network in a professional setting.

Innovators Award

Vision Systems Design kicked off the show by announcing the winners of the 2022 Vision Systems Design Innovators Awards. Attendees were able to accept their award and display it on their booth. Now in its eighth year, this program celebrates the innovative technologies, products, and systems found in machine vision and imaging.

This year’s Platinum Honoree was Ambarella’s CV3 family of AI domain controllers. It enables centralized, single-chip processing for multi-sensor perception, including high-resolution vision, radar, ultrasound, and lidar, as well as deep fusion for multiple sensor modalities and AV path planning. The result is robust Level 4 ADAS and L2+ autonomous driving systems with higher levels of environmental awareness.

Trends

The whole world is still working to get out of the pandemic. The chip shortage, which first came to public attention through the shortage of new vehicles, has affected most if not all aspects of automation. Some vendors anticipated the chip shortage and planned accordingly, purchasing enough to continue manufacturing their products. The component shortages went beyond chips, however, and as vision system component makers worked to fill orders, prices fluctuated, and continue to fluctuate, when parts were available at all. Those who planned ahead have now exhausted their component stock and are entering the fray themselves, increasing demand for parts that are already hard to find because of supply chain challenges. No one can predict exactly how long this will continue, but at least for the foreseeable future, expect longer lead times on parts orders for your vision/imaging systems.

Another consequence of the pandemic has been the labor shortage. Businesses have come to rely more on automation as they experience a labor shortage and need to reassign existing employees. Vision systems have often been used to help eliminate human error during inspection. However, they are also essential parts of most automation equipment that factories use to facilitate assembly tasks, among other things. As the need for automation increases, the need for vision/imaging systems will also increase.

Another indicator of automation growth has been the robotics market, particularly in North America. And, if the Automate show was any indication, demand for robots remains high today. What was of particular interest to me was how vision systems are used for robotics and how many companies are building turnkey solutions that include everything a customer would need to hook up a robotic arm and get straight to work. I’ve seen an increase in news about turnkey solutions hitting my desk lately. As usual, I’m sure the use of a turnkey product depends on the application, but I still found the number of solutions, especially on the robotic side, interesting. Almost everyone we spoke to at the show indicated that a huge growth area for them was logistics and warehousing, and robotics plays a huge role there. If you haven’t been approached yet to develop an imaging system for use in a logistics application, it wouldn’t surprise me if you do soon, whether or not it includes robots.

It’s hard to go anywhere these days without hearing about AI in some way. We talk about it all the time in machine vision. Over the past year, I’ve heard many vendors describe how they’re working to make deep learning and AI easier to implement on the front end. At one point during Automate, I visited the Landing AI booth to speak with David Dechow, VP of Outreach and Vision Technology at Landing AI, and Andrew Ng, CEO of Landing AI. We were talking about this trend towards easier implementation when Ng suddenly asked me, “Chris, have you ever built an AI model?” My answer was no, and the next thing I knew I was in front of one of the demo stations at the booth building my first AI model. I can attest that, as someone with no experience building AI models, the process is quite straightforward. Obviously there is a bit of a learning curve to use the software, but I figured out what I needed to do quickly and managed to build a model for inspecting screw heads, identifying which parts were good and which were bad. Dechow reminded me that there is a lot of work on the back end to make the front end so simple. Ng’s message? “If you’ve never built a deep learning model, give it a try,” he said. I mean, if I can do it…

There were over 500 exhibitors at Automate. Attendee traffic was consistent throughout the day, and exhibitors reported that the leads they received were solid leads, with no tire kickers this year!

Microsoft has gifted some of the brave members of the Windows Insiders Dev Channel with the long-awaited tabbed File Explorer.

“We’re starting to roll out this feature, so it’s not yet available to all Dev Channel Insiders,” the software giant said.

The Register was one of the lucky ones, and we have to commend Microsoft for the implementation (late as it is). The purpose of the feature is to allow users to work in multiple locations at once in File Explorer via tabs in the title bar.

The feature is in build 25136. Microsoft has also updated the left navigation pane for easier access to frequently used folders as well as general storage. The “This PC” folder is now only for physical assets, while known Windows folders (such as Documents and Pictures) have been moved elsewhere.

“We’d love to hear your feedback on which tab features you’d like to see next,” the Insider team said. We suggest some consistency might be in order – fire up Microsoft Edge, for example, and the tabs paradigm is markedly different. Overloading tabs results in lots of tiny tabs while File Explorer and Windows Terminal adopt a minimum width with arrows to scroll the tabline left and right.

Microsoft’s obsession with widgets continues in this release as well. Along with the weather, users can also get live updates from the sports and financial widgets, as well as news alerts.

In addition to the usual series of fixes (including one to deal with a freeze after a user issued a wsl --shutdown command), Microsoft has updated Notepad and Media Player.

The former received performance improvements when dealing with large chunks of text, along with native Arm64 support. We had to head to the Microsoft Store to download the update, which is available on all channels, and saw the architecture change from x64 to ARM64 in Task Manager. The latter, which is Dev Channel only, has finally added the ability to sort songs and albums in a collection by date added.

As if to emphasize that this is all bleeding-edge stuff, Microsoft has warned that Surface Pro X users will get a black screen when trying to wake from hibernation and will need to power cycle their slab to bring it back to life. The recommendation for these users is to skip this build. ®

A European team of university students has cobbled together the first RISC-V supercomputer capable of showing balanced power consumption and performance.

More importantly, it demonstrates a potential way forward for RISC-V in high-performance computing and, by proxy, a chance for Europe to completely shed dependence on US chip technologies.

The ‘Monte Cimone’ cluster won’t be crunching massive weather simulations or the like anytime soon, as it’s just an experimental machine. That said, it goes to show that the performance sacrifices for lower power envelopes aren’t necessarily as dramatic as many think.

The six-node cluster, built by folks from the University of Bologna and CINECA, Italy’s largest supercomputing center, was part of a wider student cluster competition to showcase various elements of HPC performance beyond simple floating point capability. The cluster-building team, called NotOnlyFLOPs, wanted to establish the power-performance profile of RISC-V when using SiFive’s Freedom U740 system-on-chip.

This 2020-era SoC has five 64-bit RISC-V processor cores (four U74 application cores and one S7 system management core), 2MB of L2 cache, Gigabit Ethernet, and various peripheral and hardware controllers. It can operate at up to about 1.4 GHz.

Here is an overview of the components as well as the feeds and speeds of Monte Cimone:

- Six dual-board servers in a 4.44 cm (1U) high, 42.5 cm wide, 40 cm deep form factor. Each board follows the industry standard Mini-ITX form factor (170mm by 170mm);

- Each board includes a SiFive Freedom U740 SoC and 16 GB of 64-bit DDR memory running at 1866 MT/s, plus a PCIe Gen 3 x8 bus running at 7.8 GB/s, a gigabit Ethernet port and USB 3.2 Gen 1 interfaces;

- Each node has an M.2 M-key expansion slot occupied by a 1TB NVMe 2280 SSD used by the operating system. A microSD card is inserted into each board and used for UEFI boot;

- Two 250W power supplies are integrated inside each node to support hardware and future accelerators and PCIe expansion cards.
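The 7.8 GB/s figure in the list above matches what the PCIe Gen 3 signalling rate implies for an x8 link. A minimal sketch of that calculation follows, using the standard 8 GT/s per-lane rate and 128b/130b line encoding; the function name is illustrative, and protocol overhead above the encoding layer is ignored.

```python
def pcie_gen3_gb_s(lanes):
    """Usable PCIe Gen 3 bandwidth in GB/s, one direction.

    Each lane signals at 8 GT/s, and 128b/130b encoding carries
    128 payload bits in every 130 bits on the wire. Packet and
    protocol overheads are not modelled here.
    """
    gigatransfers_per_s = 8.0
    encoding_efficiency = 128 / 130
    bits_per_byte = 8
    return gigatransfers_per_s * encoding_efficiency * lanes / bits_per_byte


if __name__ == "__main__":
    print(f"PCIe Gen 3 x8: {pcie_gen3_gb_s(8):.2f} GB/s per direction")
```

Real transfers will see somewhat less than this once packet headers and flow control are accounted for.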

A top view of each node, showing the two SiFive Freedom SoC boards

The Freedom SoC motherboards are essentially SiFive’s HiFive Unmatched boards. Two of the six compute nodes are equipped with an Infiniband Host Channel Adapter (HCA), as used by most supercomputers. The goal was to deploy Infiniband at 56 Gb/s and use RDMA to maximize possible I/O performance.

It’s ambitious for a young architecture and it didn’t happen without a few hiccups. “PCIe Gen 3 lanes are currently supported by the vendor,” the cluster team wrote.

“Preliminary experimental results show that the kernel is able to recognize the device driver and mount the kernel module to manage Mellanox’s OFED stack. We are unable to use the full RDMA capabilities of the HCA due to yet to be identified incompatibilities between the software stack and the kernel driver. Nonetheless, we successfully ran an IB ping test between two boards and between a board and an HPC server, showing that full Infiniband support might be achievable. This is currently a feature under development.”

Porting the HPC software stack turned out to be easier than expected. “We ported to Monte Cimone all the essential services needed to run HPC workloads in a production environment, namely NFS, LDAP and the SLURM job scheduler. Porting all the necessary software packages to RISC-V was relatively straightforward, so we can safely say that there are no barriers to exposing Monte Cimone as a computing resource in an HPC installation,” the team noted.

While a remarkable architectural addition to the supercomputing ranks, a RISC-V cluster like this is unlikely to make it to the list of the top 500 fastest systems in the world. Its design specs are like a low-powered workhorse, not a floating-point monster.

As the development team noted in its detailed description of the system, “Monte Cimone is not intended to achieve strong floating-point performance, but it was built with the intention of ‘priming the pipe’ and exploring the challenges of integrating a multi-node RISC-V cluster capable of delivering a production HPC stack including interconnect, storage, and power monitoring infrastructure on RISC-V hardware.”

E4 Computer Engineering served as integrator and partner on the “Monte Cimone” cluster, which will eventually pave the way for further testing of the RISC-V platform itself as well as its ability to play well with other architectures. That is an important piece, as we are unlikely to see an exascale-class RISC-V system in the next few years at least.

According to E4, “Cimone enables developers to test and validate scientific and technical workloads in a rich software stack, including development tools, libraries for message-passing programming, BLAS, FFT, drivers for HS networks and I/O devices. The goal is to achieve a future-ready position capable of approaching and exploiting the features of the RISC-V ISA for scientific and engineering applications and workloads in an operational environment.”

Dr. Daniele Cesarini, HPC specialist at CINECA, said: “As a supercomputing center, we are very interested in RISC-V technology to support the scientific community. We are excited to contribute to the RISC-V ecosystem by supporting the installation and tuning of widely used scientific codes and mathematical libraries to advance the development of high-performance RISC-V processors. We believe that Monte Cimone will be the harbinger of the next generation of supercomputers based on RISC-V technology and we will continue to work in synergy with E4 Computer Engineering and the University of Bologna to prove that RISC-V is ready to stand shoulder to shoulder with the HPC giants.”

There is plenty of RISC-V funding and no shortage of projects in Europe, although the fruits of this work may take years to see. Now even Intel is looking to the future of supercomputing. It’s quite a RISC-Y gamble (you saw it coming), but with few native architectural options in Europe, at least picking an early winner is easy.